Generative artificial intelligence has moved from experimentation into industrial application. Within this context, Google’s release of Nano Banana 2 on February 26, 2026, marks a significant milestone in combining high-fidelity reasoning with high-throughput inference. The model, technically identified as Gemini 3.1 Flash Image, succeeds the original Nano Banana (Gemini 2.5 Flash Image) and the specialized Nano Banana Pro (Gemini 3 Pro Image). A comparison of the architectural differences, performance benchmarks, and economic implications of these models shows Google attempting to resolve the fundamental tension between generation latency and semantic precision.

1. Historical Trajectory and Branding Significance

The nomenclature “Nano Banana” originated within the Google DeepMind development teams as an internal codename for the Gemini 2.5 Flash Image model. The designation was initially intended to reflect the model’s lightweight, efficient nature—a “nano” footprint with a playful “banana” identifier. However, following its release in August 2025, the branding resonated with a global user base, particularly in high-growth AI markets such as India, where it attracted 13 million users in its first month. The subsequent decision to adopt this codename for public-facing branding signifies a shift in Google’s marketing strategy toward accessible, viral AI tooling.

On November 20, 2025, the release of Nano Banana Pro signaled an expansion into the professional creative sector. While the original model focused on speed for casual ideation, the Pro variant used the Gemini 3 Pro architecture to introduce “Thinking Mode,” advanced text rendering, and high-fidelity 4K output. Nano Banana 2, which debuted in early 2026, is the latest iteration of this lineage and attempts to combine the professional capabilities of the Pro model with the speed of the Flash architecture.

2. Architectural Foundations of the Gemini 3 Series

The primary technical distinction between Nano Banana 2 and Nano Banana Pro lies in their underlying foundation models. Nano Banana Pro is constructed upon the Gemini 3 Pro Image architecture, which prioritizes complex multimodal reasoning and deep compositional planning. Conversely, Nano Banana 2 is built on the Gemini 3.1 Flash Image architecture, a newer framework optimized for rapid inference without significantly degrading the semantic quality achieved by its predecessor.

Detailed Model Specification Matrix

The following table provides a comprehensive technical comparison of the three primary models in the Nano Banana family, illustrating the trade-offs in resolution, speed, and architectural depth.

| Metric | Nano Banana (Original) | Nano Banana Pro | Nano Banana 2 |
|---|---|---|---|
| Foundation Model | Gemini 2.5 Flash Image | Gemini 3 Pro Image | Gemini 3.1 Flash Image |
| API Model ID | gemini-2.5-flash-image | gemini-3-pro-image-preview | gemini-3.1-flash-image-preview |
| Release Date | August 2025 | November 20, 2025 | February 26, 2026 |
| Max Native Resolution | 1024px (1K) | 4096px (4K) | 4096px (4K) |
| Inference Time (1K) | ~3 seconds | 8–12 seconds | 4–6 seconds |
| Context Window (Input) | N/A | 65,536 tokens | 131,072 tokens |
| Context Window (Output) | N/A | 32,768 tokens | 32,768 tokens |
| Text Accuracy (Est.) | ~80% | ~94% | ~90–92% |
| Reference Image Limit | 4 images | 14 images | 14 images |

A key technical advancement in Nano Banana 2 is its 128k-token (131,072) input context window, double the 65k-token (65,536) capacity of Nano Banana Pro. The larger window lets developers supply more extensive style guides or complex multimodal instructions in a single generation request. Nano Banana 2 also adds a 512px low-resolution tier, below the 1K, 2K, and 4K tiers, designed specifically for rapid prototyping and iterative design loops.
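As a rough illustration, the specification matrix can be encoded as plain data so that a request is checked against a model's limits before it is sent. The class name `ModelSpec`, the dictionary keys, and the `fits` helper are hypothetical; the figures are copied from the table above rather than fetched from any API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ModelSpec:
    """Limits copied from the specification matrix (illustrative only)."""
    model_id: str
    max_resolution_px: int
    input_context_tokens: Optional[int]  # None where the table lists N/A
    max_reference_images: int


# Hypothetical short keys; the model IDs come from the table.
SPECS = {
    "nano-banana": ModelSpec("gemini-2.5-flash-image", 1024, None, 4),
    "nano-banana-pro": ModelSpec("gemini-3-pro-image-preview", 4096, 65_536, 14),
    "nano-banana-2": ModelSpec("gemini-3.1-flash-image-preview", 4096, 131_072, 14),
}


def fits(spec: ModelSpec, prompt_tokens: int, resolution_px: int) -> bool:
    """Return True if the request stays within the tabulated limits."""
    if resolution_px > spec.max_resolution_px:
        return False
    if spec.input_context_tokens is None:
        # The table gives no figure for this model, so the token check is skipped.
        return True
    return prompt_tokens <= spec.input_context_tokens
```

Under these figures, a 100,000-token style guide at 4K fits Nano Banana 2 but exceeds the input window listed for Nano Banana Pro.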

3. Multimodal Reasoning and the Thinking Mode Paradigm

The most distinctive feature of the Gemini 3-based models is the integration of “Thinking Mode,” a technical framework that applies chain-of-thought reasoning to the image synthesis process. Traditional generative models often function as single-pass rasterizers, mapping text tokens directly to latent space representations. In contrast, models supporting Thinking Mode engage in a multi-stage internal process before producing the final pixels.

The Mechanism of Visual Reasoning

When Thinking Mode is enabled via the include_thoughts API parameter, the model generates interim “thought images” or draft compositions. These drafts are used to test the layout, spatial logic, and lighting of a scene. For example, when prompted to create a complex infographic, the model might first “reason” about the hierarchy of information and the placement of labels before rendering the final high-resolution assets.

The implementation of Thinking Mode in Nano Banana 2 is optimized for “Flash speeds,” meaning it can execute these reasoning steps in roughly 4 to 6 seconds, compared to the 10 to 20 seconds required by the Pro model. This speed differential is critical for real-time applications where users require rapid feedback during a creative session. Developers can configure a thinking_budget_tokens parameter to dictate how much computational effort the model should expend on this reasoning process, allowing for a customizable balance between quality and speed.
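The two parameters named above can be pictured as part of a request payload. The sketch below is an assumption about shape only: the function `build_generation_request`, the `"thinking"` key, and the default budget are hypothetical and do not reproduce the actual wire format or SDK call; only the parameter names `include_thoughts` and `thinking_budget_tokens` and the model ID come from the text.

```python
def build_generation_request(
    prompt: str,
    *,
    include_thoughts: bool = True,
    thinking_budget_tokens: int = 1024,  # hypothetical default, not a documented value
) -> dict:
    """Assemble an illustrative request payload with Thinking Mode settings."""
    if thinking_budget_tokens < 0:
        raise ValueError("thinking_budget_tokens must be non-negative")
    return {
        "model": "gemini-3.1-flash-image-preview",
        "prompt": prompt,
        # Hypothetical grouping of the two parameters described in the text.
        "thinking": {
            "include_thoughts": include_thoughts,
            "thinking_budget_tokens": thinking_budget_tokens,
        },
    }


# A smaller budget trades reasoning depth for speed; a larger one does the reverse.
fast_request = build_generation_request(
    "Infographic of the water cycle with labeled stages",
    thinking_budget_tokens=256,
)
```

Keeping the budget as an explicit argument makes the quality-versus-speed choice visible at each call site instead of hiding it in a global setting.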

4. Precision Text Rendering and Linguistic Translation

Historically, generative image models have struggled with the accurate depiction of legible text, often producing “hallucinated” characters or inconsistent typography. Nano Banana Pro achieved a significant breakthrough by reaching approximately 94% text rendering accuracy, making it viable for professional marketing mockups and signage. Nano Banana 2 maintains a high standard in this regard, with an estimated accuracy of 90% to 92%.

Localization and Global Campaign Management

Beyond mere legibility, Nano Banana 2 supports native text translation and localization directly within the image canvas. This feature allows enterprises to generate a single “hero” asset in one language and immediately produce localized variants for dozens of different regions. This capability is powered by the underlying Gemini 3.1 architecture, which possesses advanced cross-lingual understanding.

Nano Banana 2 also appears to be particularly proficient at rendering complex scripts. In benchmark tests involving classical Chinese text (such as “Chibi Fu”), it reportedly achieved higher accuracy than the Pro variant, which suggests that the newer Gemini 3.1 architecture has benefited from more refined linguistic training sets.

5. Search Grounding and Real-World Knowledge Integration

One of the most disruptive aspects of the Nano Banana ecosystem is its ability to ground generated imagery in real-time information obtained through Google Search. This “Search Grounding” feature allows the model to verify factual details before rendering an image, ensuring that visuals are based on reality rather than just probabilistic patterns found in the training data.

Implications for Data Visualization and Accuracy

Search grounding enables several advanced use cases:

- Real-Time Infographics: The model can pull today’s weather forecast or the latest sports scores to create data-driven visualizations that are factually current.

- Product and Brand Accuracy: By accessing Google Image Search, the model can more accurately render specific products, logos, or celebrity likenesses that may have changed since its last knowledge cutoff in January 2025.

- Scientific and Technical Illustration: Users can prompt the model to find the latest NASA data on Mars and generate an educational poster that incorporates the specific findings and imagery from that search.

This integration transforms the image generator from a creative tool into an informational one, where the output is a synthesis of creative design and verified data.

6. Subject Consistency and Character Preservation

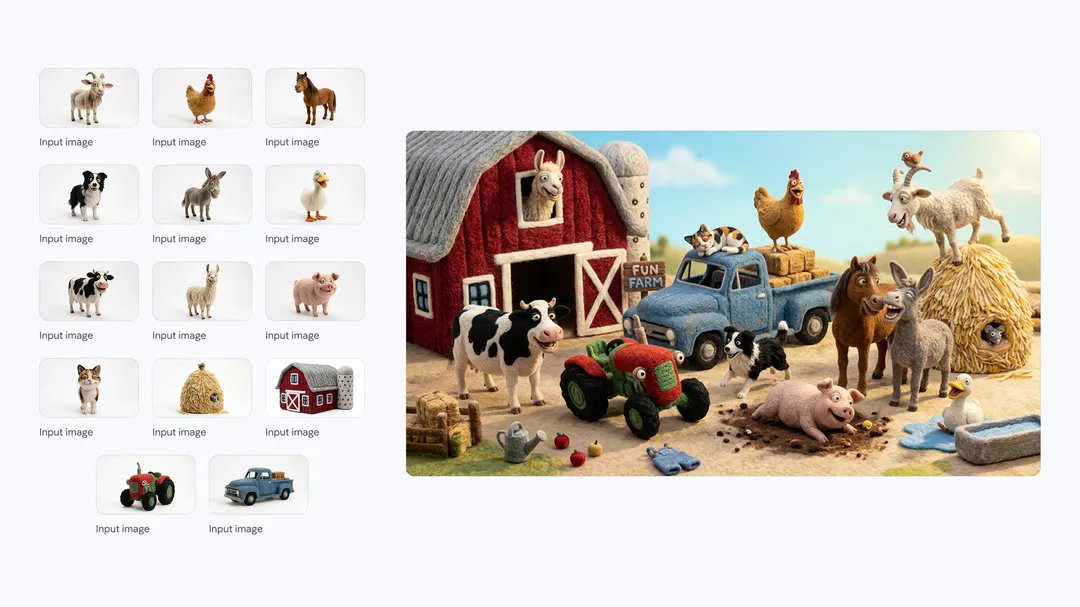

Maintaining visual consistency across multiple generations—a concept known as “subject preservation”—is essential for storytelling, storyboarding, and brand marketing. Nano Banana 2 introduces significant upgrades in this area, allowing creators to maintain the appearance of specific characters or objects across various scenes without the “drifting” common in earlier models.

Consistency Thresholds and Benchmarks

The model is designed to handle multiple subjects simultaneously while preserving high fidelity.

| Consistency Category | Threshold Limit | Application |
|---|---|---|
| Character Consistency | Up to 5 People | Storyboarding and visual narratives. |
| Object Fidelity | Up to 14 Objects | Product showcases and instructional diagrams. |
| Reference Images | Up to 14 Inputs | Brand style guides and character turnarounds. |

For professional creators, this means that a single character can be placed in diverse environments—such as a kitchen, a spaceship, and a mountain peak—while maintaining the same facial features, clothing details, and overall aesthetic. This consistency is achieved through an “expanded visual context window,” which acts as a form of “few-shot prompting” for visual styles.
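A pre-flight check against the thresholds in the table might look like the following sketch. The function name `check_reference_set` and its return format are hypothetical; the three limits are taken from the table above, and the check runs locally without calling any service.

```python
from typing import List

# Limits from the consistency table above.
MAX_CHARACTERS = 5
MAX_OBJECTS = 14
MAX_REFERENCE_IMAGES = 14


def check_reference_set(characters: int, objects: int, reference_images: int) -> List[str]:
    """Return a list of human-readable problems; an empty list means the set is within limits."""
    problems = []
    if characters > MAX_CHARACTERS:
        problems.append(f"{characters} characters exceeds the limit of {MAX_CHARACTERS}")
    if objects > MAX_OBJECTS:
        problems.append(f"{objects} objects exceeds the limit of {MAX_OBJECTS}")
    if reference_images > MAX_REFERENCE_IMAGES:
        problems.append(
            f"{reference_images} reference images exceeds the limit of {MAX_REFERENCE_IMAGES}"
        )
    return problems


# A storyboard with 2 recurring characters, 3 props, and 6 reference images.
issues = check_reference_set(characters=2, objects=3, reference_images=6)
```

Returning every violation at once, rather than failing on the first, lets a creator trim a storyboard in a single pass.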

7. Economic Analysis and API Tokenization

The move from Nano Banana Pro to Nano Banana 2 represents a significant shift in the cost-performance ratio for developers. The pricing for these models is structured around token consumption, where image outputs are charged based on their resolution.

Comparative Pricing Table (PayGo Rates)

| Resolution | Nano Banana 2 Cost | Nano Banana Pro Cost |
|---|---|---|
| 512px | ~$0.022 | N/A |
| 1K (1024px) | ~$0.034 | ~$0.134 |
| 2K (2048px) | ~$0.050 | ~$0.134 |
| 4K (4096px) | ~$0.076 | ~$0.240 |

The table shows that a standard 1K image costs about $0.034 on Nano Banana 2 versus about $0.134 on Nano Banana Pro, a reduction of approximately 75%. This price reduction is attributed to the efficiency of the Gemini 3.1 Flash architecture. For high-volume batch processing, developers can access even lower rates through the “Flex PayGo” and “Batch Prediction” tiers, which often reduce these costs by another 50%.
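The savings figure can be reproduced directly from the pricing table. The sketch below uses the approximate per-image prices listed above; the helper names `savings_percent` and `batch_cost` are hypothetical, and the 50% discount is the rough figure quoted in the text, not a published rate.

```python
# Approximate per-image prices in USD from the table: (Nano Banana 2, Nano Banana Pro).
PRICES = {
    "512px": (0.022, None),  # no Pro price listed for this tier
    "1K": (0.034, 0.134),
    "2K": (0.050, 0.134),
    "4K": (0.076, 0.240),
}


def savings_percent(nb2_price: float, pro_price: float) -> float:
    """Percentage by which Nano Banana 2 undercuts Nano Banana Pro."""
    return (1 - nb2_price / pro_price) * 100


def batch_cost(images: int, unit_price: float, discount: float = 0.5) -> float:
    """Total cost of a batch after applying a fractional discount."""
    return images * unit_price * (1 - discount)


for tier, (nb2, pro) in PRICES.items():
    if pro is not None:
        print(f"{tier}: {savings_percent(nb2, pro):.1f}% cheaper")
```

With these inputs the saving works out to roughly 74.6% at 1K, 62.7% at 2K, and 68.3% at 4K, so the "approximately 75%" figure applies to the 1K tier specifically. A batch of 10,000 images at 1K with the additional 50% discount would cost about $170.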

8. The Alignment-Utility Conflict: Censorship and Guardrails

The rollout of Nano Banana 2 has not been without controversy, particularly regarding its safety filters and content moderation policies. User reports on community forums such as Reddit indicate a significant increase in the frequency of “blocked” prompts compared to the earlier Pro model.

The “UI Catch-22” and Filtering Issues

A primary point of frustration for power users is what has been described as a “UI catch-22” in the Gemini app interface. Because Nano Banana 2 is the default model, it acts as the initial gatekeeper for all prompts. If the Nano Banana 2 safety filter deems a prompt “unsafe”—even if it is a false positive—the user is never given the option to “Redo with Pro,” effectively locking them out of the more sophisticated model for that specific query.

Reports of false positives include:

- Depiction of Adults: Prompts describing adults in mundane activities (e.g., a 25-year-old character at the beach) are sometimes blocked by filters intended to protect minors.

- Action Scenes: Dynamic prompts involving “gritty” or “cyber-ninja” themes are more frequently flagged as violent in the newer model than in the Pro version.

- Aesthetic Degradation: Some users have observed that the increased focus on safety and “alignment” has resulted in images that look “weirdly plastic” or “flat,” with a loss of the realistic skin textures that characterized Nano Banana Pro.

These issues suggest a growing tension between Google’s corporate safety mandates and the creative freedom that professional users require. As a result, some users have sought alternative platforms with less restrictive guardrails for niche art styles.

9. Deployment and Platform Integration

Google has integrated the Nano Banana 2 model into a wide array of existing services, making it one of the most widely available generative image models in the world.

Ecosystem Reach

- Gemini App: Replaces Nano Banana Pro as the default for all modes (Fast, Thinking, and Pro).

- Search and Lens: Enabled in AI Mode for Search and Google Lens across 141 countries and 8 additional languages.

- Google Workspace: Integrated into Google Slides and Vids to assist in presentation design and creative asset generation.

- Creative Tools (Flow): Nano Banana 2 is the default model in Flow, available for zero credits to help with storyboard creation.

- Developer API (Vertex AI): Available in preview with support for Provisioned Throughput and detailed token-based billing.

The model also supports the latest provenance standards, incorporating SynthID digital watermarking and C2PA Content Credentials. This ensures that every image generated can be identified as AI-derived, a critical requirement for enterprise adoption in the current regulatory environment.

10. Comparative Utility: When to Deploy Nano Banana 2 vs. Nano Banana Pro

While Nano Banana 2 is marketed as the new standard, the Pro model remains relevant for specialized tasks. Strategic decision-making for deployment should be based on the specific requirements of the creative workflow.

Deployment Strategy Matrix

| Use Case Scenario | Recommended Model | Reasoning |
|---|---|---|
| Rapid Ideation / Prototyping | Nano Banana 2 | 4–6 second speed and 512px low-cost tier allow for hundreds of iterations at minimal expense. |
| High-Volume Social Media | Nano Banana 2 | Flash speed and lower API costs are optimized for scale and high-frequency posting. |
| Hero Assets / Print Quality | Nano Banana Pro | Superior lighting, 8K support (Ultra version), and deepest reasoning for flagship visuals. |
| Complex Multi-Step Editing | Nano Banana Pro | Better adherence to complex natural language instructions without masks or layers. |
| Factual / Data Infographics | Nano Banana Pro | Highest text accuracy (94%) and most sophisticated grounding logic. |

In professional environments, the most effective workflow utilizes Nano Banana 2 for the initial exploration and refinement phases to save time and budget. Once a final concept is selected, it can be “upgraded” or “regenerated” using Nano Banana Pro to ensure the highest possible fidelity and lighting accuracy for the final production asset.
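The draft-then-finalize workflow described above can be sketched as a simple routing plan. The use-case keys and the functions `recommend` and `plan_workflow` are hypothetical names chosen for illustration; the recommendations and model IDs are those given in the tables earlier in this article.

```python
from typing import List, Tuple

# Model IDs from the specification matrix.
MODEL_IDS = {
    "nano-banana-2": "gemini-3.1-flash-image-preview",
    "nano-banana-pro": "gemini-3-pro-image-preview",
}

# Recommendations from the deployment strategy matrix (keys are hypothetical labels).
RECOMMENDED = {
    "rapid_ideation": "nano-banana-2",
    "high_volume_social": "nano-banana-2",
    "hero_asset": "nano-banana-pro",
    "multi_step_editing": "nano-banana-pro",
    "factual_infographic": "nano-banana-pro",
}


def recommend(use_case: str) -> str:
    """Return the API model ID recommended for a use case."""
    return MODEL_IDS[RECOMMENDED[use_case]]


def plan_workflow(draft_rounds: int) -> List[Tuple[str, str]]:
    """Plan cheap drafts on Nano Banana 2 followed by one final render on Pro."""
    if draft_rounds < 0:
        raise ValueError("draft_rounds must be non-negative")
    steps = [("draft", MODEL_IDS["nano-banana-2"])] * draft_rounds
    steps.append(("final", MODEL_IDS["nano-banana-pro"]))
    return steps


# Three exploratory drafts, then a single production render.
plan = plan_workflow(draft_rounds=3)
```

Separating the plan from the generation calls keeps the routing policy in one place, so it can be changed without touching the code that sends requests.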

11. Future Outlook and Strategic Implications

The trajectory from Nano Banana to Nano Banana 2 indicates that the industry is rapidly moving toward a future where generative media is deeply integrated into every facet of digital communication. The reduction in latency and cost provided by the Gemini 3.1 Flash architecture suggests that real-time, on-device image generation is becoming a near-term reality.

The success of the Nano Banana brand also highlights a shift in user expectations. Creators no longer just want a model that generates “cool pictures”; they want a tool that understands complex instructions, maintains character identity across a series of images, renders perfect text, and is grounded in the real world. As Google continues to iterate on this ecosystem, the boundary between “generative art” and “functional design” will likely continue to blur.
